Fine-tuning LLMs for Search

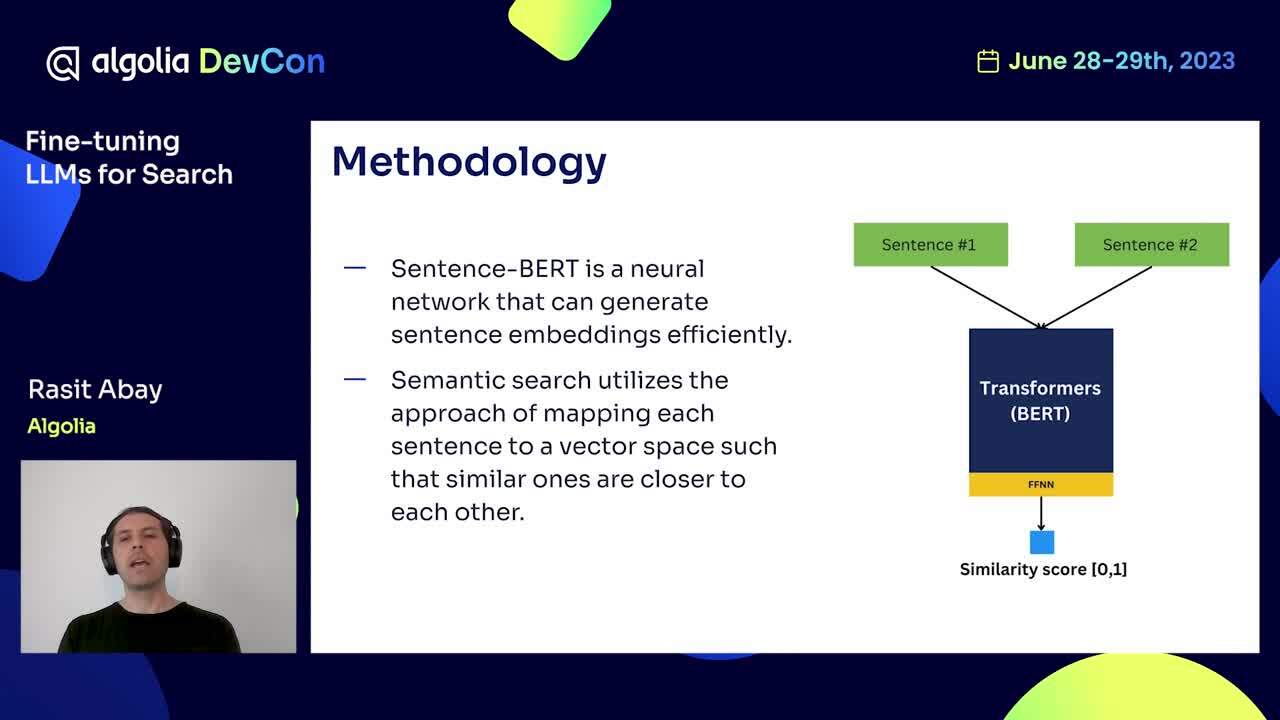

Large Language Models (LLMs) have been put in the spotlight by generative AI solutions like ChatGPT, but they are also used in Algolia's AI-powered search. LLMs are huge pre-trained machine learning models powered by neural networks.

At Algolia, we can employ different AI models to improve language understanding, which in turn improves search results. In this video, we explain how language understanding can be improved through supervised learning with a contrastive loss. We took the Amazon ESCI datasets, used them to fine-tune our LLMs, then ran validation to measure how much better search results are with the fine-tuned model than with the default pre-trained models. While we only show a supervised learning approach to fine-tuning here, we also leverage other learning methods, such as reinforcement learning, for even greater accuracy.
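
For readers who want a concrete picture of a contrastive loss, here is a minimal sketch of the classic pairwise formulation. It is an illustration under stated assumptions, not the training code used in the video: the function names, the Euclidean distance, the `margin` value, and the reduction of ESCI labels to a single relevant/irrelevant flag are all hypothetical choices. The idea is that a query and a relevant product are pulled together in embedding space, while a query and an irrelevant product are pushed apart until they are at least `margin` away from each other.

```python
import math


def euclidean_distance(a, b):
    """Euclidean distance between two equal-length embedding vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(query_vec, product_vec, is_relevant, margin=1.0):
    """Pairwise contrastive loss for one (query, product) pair.

    is_relevant is a hypothetical binary label (for example, derived
    from an ESCI judgment); margin is an assumed hyperparameter.
    """
    d = euclidean_distance(query_vec, product_vec)
    if is_relevant:
        # Relevant pair: any remaining distance is penalized.
        return d ** 2
    # Irrelevant pair: penalized only while closer than the margin.
    return max(0.0, margin - d) ** 2


# Toy two-dimensional embeddings, invented for illustration.
query = [0.0, 0.0]
close_product = [0.0, 0.5]
distant_product = [3.0, 4.0]

for name, vec in [("close", close_product), ("distant", distant_product)]:
    for label in (True, False):
        loss = contrastive_loss(query, vec, label)
        print(f"{name} product, relevant={label}: loss={loss}")
```

During fine-tuning, a loss like this would be averaged over many labeled pairs and minimized with gradient descent, so that the model's embeddings place relevant products nearer to the query than irrelevant ones.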

Presented by Rasit Abay, Sr. Data Scientist at Algolia

Learn more about Algolia on https://algolia.com/developers